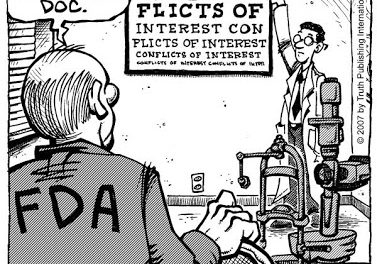

The fact that studies with negative results can be ignored (left unpublished in the literature and unreported to the regulatory authorities, at the discretion of the studies’ sponsors) is the most blatant bias in capital-S Science and capital-M Medicine. Some major types of bias are neatly defined in an essay by Dr. Aaron Carroll that ran in the New York Times on Sept. 25:

> Publication bias refers to the decision on whether to publish results based on the outcomes found. With the 105 studies on antidepressants, half were considered “positive” by the F.D.A., and half were considered “negative.” Ninety-eight percent of the positive trials were published; only 48 percent of the negative ones were.
>
> Outcome reporting bias refers to writing up only the results in a trial that appear positive, while failing to report those that appear negative. In 10 of the 25 negative studies, studies that were considered negative by the F.D.A. were reported as positive by the researchers, by switching a secondary outcome with a primary one, and reporting it as if it were the original intent of the researchers, or just by not reporting negative results.
>
> Spin refers to using language, often in the abstract or summary of the study, to make negative results appear positive. Of the 15 remaining “negative” articles, 11 used spin to puff up the results. Some talked about statistically nonsignificant results as if they were positive, by referring only to the numerical outcomes. Others referred to trends in the data, even though they lacked significance. Only four articles reported negative results without spin.
>
> Spin works. A randomized controlled trial found that clinicians who read abstracts in which nonsignificant results for cancer treatments were rewritten with spin were more likely to think the treatment was beneficial and more interested in reading the full-text article.
>
> It gets worse. Research becomes amplified by citation in future papers. The more it’s discussed, the more it’s disseminated both in future work and in practice. Positive studies were cited three times more than negative studies. This is citation bias.

Note to Dr. Carroll:

Thanks for the excellent essay in the Times. (Whoever wrote the inane online headline should be horsewhipped.) When you revisit the topic, you might point out that the criteria for publication in “the literature” contain inherent biases. One that seems indefensible from a scientific POV is that a paper can be disqualified because some of its findings have already been published elsewhere. O’Shaughnessy’s had to spike an excellent article recounting Dr. David Meiri’s research because Meiri hopes Cell will accept a paper reporting his findings. Why should it make any difference to the editors of Cell if a tabloid read by doctors and patients runs a piece about Meiri that includes some of his data? Why is the scoop so important? All that should matter is the accuracy of the data!